
Image tuner file

11/4/2023

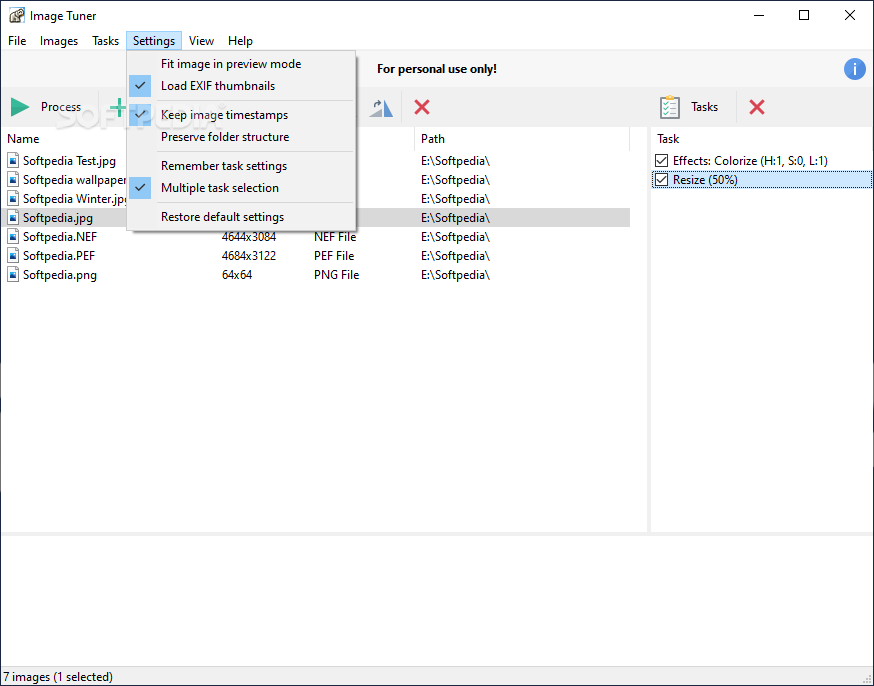

Image Tuner is a program for batch resizing, converting, watermarking, and renaming digital photos and images, reading from and writing to JPEG, BMP, PNG, TIFF, and GIF formats. It uses a fast image-resizing algorithm to speed up the whole process.

Result logdir: /Users/foo/ray_results/myexp

How to redirect Trainable logs to files in a Tune run?

In Tune, Trainables are run as remote actors. By default, Ray collects actors' stdout and stderr and prints them to the head process (see ray worker logs for more information). Logging that happens within Tune Trainables follows this handling by default. However, if you wish to collect Trainable logs in files for analysis, Tune offers the `log_to_file` option for this. It applies to print statements, `warnings.warn`, and similar output.

By passing `log_to_file=True` to `air.RunConfig`, which is taken in by `Tuner`, stdout and stderr will be logged to `trial_logdir/stdout` and `trial_logdir/stderr`, respectively.

This can cause problems when the trainable is moved across different nodes throughout its lifetime, which can happen with some schedulers or with node failures. We may prioritize enabling this if there are enough user requests. If this impacts your workflow, consider commenting on the corresponding issue.

We know that logging and observability can make a big difference to your workflow. Let us know your preferred way to interact with logging that happens in trainables.

How do you log arbitrary files from a Tune Trainable?

By default, Tune only logs the training result dictionaries and checkpoints from your Trainable. However, you may want to save a file that visualizes the model weights or model graph, or use a custom logging library that requires multi-process logging. For example, you may want to do this if you're trying to log images to TensorBoard. We refer to these saved files as trial artifacts.

You can save trial artifacts directly in the trainable, as shown below:

```python
import os

import logging_library  # ex: mlflow, wandb
from ray import tune


class CustomLogging(tune.Trainable):
    def setup(self, config):
        trial_id = self.trial_id
        logging_library.init(
            name=trial_id,
            id=trial_id,
            resume=trial_id,
            reinit=True,
            allow_val_change=True,
        )
        # Log to the Trainable's working directory (the trial directory).
        logging_library.set_log_path(os.getcwd())

    def step(self):
        ...  # calls into logging_library

        # You can also write to a file directly.
        # The working directory is set to the trial directory, so
        # you don't need to worry about multiple workers saving
        # to the same location.
        with open(f"./artifact_...", "w") as f:
            ...
```

In the code snippet above, `logging_library` refers to whatever third-party logging library you are using. Note that `logging_library.set_log_path(os.getcwd())` is an imaginary API that we are using for demonstration purposes. It highlights that the third-party library should be configured to log to the Trainable's working directory. The current working directory of both functional and class trainables is set to the corresponding trial directory once it has been launched as a remote Ray actor.
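The claim that a per-trial working directory prevents workers from overwriting each other's files can be checked without Ray. The following is a simulation under stated assumptions, not Tune's actual mechanism. It uses `os.chdir` to stand in for Tune setting each trial's working directory. The helper names (`write_artifact`, `run_fake_trial`) and the file names (`sample_<step>.txt`) are hypothetical. Two fake trials write identical relative filenames, yet each file lands in its own trial directory.

```python
import os
import tempfile
from typing import List


def write_artifact(step: int) -> str:
    """Hypothetical helper: write an artifact to a *relative* path,
    the way code inside a trainable might."""
    path = f"./sample_{step}.txt"
    with open(path, "w") as f:
        f.write(f"artifact data for step {step}\n")
    return os.path.abspath(path)


def run_fake_trial(trial_dir: str, steps: int) -> List[str]:
    """Hypothetical stand-in for Tune: run the 'trial' with its working
    directory set to trial_dir, then restore the previous directory."""
    previous = os.getcwd()
    os.chdir(trial_dir)
    try:
        return [write_artifact(step) for step in range(steps)]
    finally:
        os.chdir(previous)


# realpath avoids symlinked temp dirs (e.g. /var -> /private/var on macOS).
root = os.path.realpath(tempfile.mkdtemp())
trial_dirs = [os.path.join(root, name) for name in ("trial_0", "trial_1")]
for d in trial_dirs:
    os.makedirs(d)

paths_0 = run_fake_trial(trial_dirs[0], steps=2)
paths_1 = run_fake_trial(trial_dirs[1], steps=2)

# Same relative filenames in both trials, but no collisions.
print(sorted(os.listdir(trial_dirs[0])))  # ['sample_0.txt', 'sample_1.txt']
print(sorted(os.listdir(trial_dirs[1])))  # ['sample_0.txt', 'sample_1.txt']
```

Restoring the previous directory in `finally` keeps one fake trial from leaking its working directory into the next.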
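The `log_to_file=True` behavior sends stdout and stderr to `trial_logdir/stdout` and `trial_logdir/stderr`. A rough standard-library analogue is sketched below. It is not how Ray implements the option, and Ray may capture output differently (for example, at the file-descriptor level). This sketch only captures Python-level writes to `sys.stdout` and `sys.stderr`, and `log_to_trial_files` and `trainable_body` are hypothetical names.

```python
import contextlib
import os
import sys
import tempfile
from typing import Iterator


@contextlib.contextmanager
def log_to_trial_files(trial_logdir: str) -> Iterator[None]:
    """Hypothetical analogue of log_to_file=True: while active, Python-level
    writes to sys.stdout / sys.stderr go to trial_logdir/stdout and
    trial_logdir/stderr."""
    out_path = os.path.join(trial_logdir, "stdout")
    err_path = os.path.join(trial_logdir, "stderr")
    with open(out_path, "w") as out, open(err_path, "w") as err:
        with contextlib.redirect_stdout(out), contextlib.redirect_stderr(err):
            yield


def trainable_body() -> None:
    # Hypothetical stand-in for code running inside a Trainable.
    print("step 1: loss=0.5")
    print("something looks off", file=sys.stderr)


trial_logdir = tempfile.mkdtemp()
with log_to_trial_files(trial_logdir):
    trainable_body()

# Back on the real stdout: show what was captured.
with open(os.path.join(trial_logdir, "stdout")) as f:
    print(f.read(), end="")  # prints: step 1: loss=0.5
```

`contextlib.redirect_stdout` swaps `sys.stdout` for the whole process, so this approach is not suitable for threaded code.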
